Enterprise Architecture Frameworks, Methods, Notations, Reference Content – some clarifications

When talking with clients and prospects who are new to the topic of EA or BPM, a lot of the key terms, such as Enterprise Architecture Frameworks, not only seem to be strange, but are also used interchangeably. This article takes the four most used terms, defines them, and shows how they are relevant in getting started with your architecture implementation program.

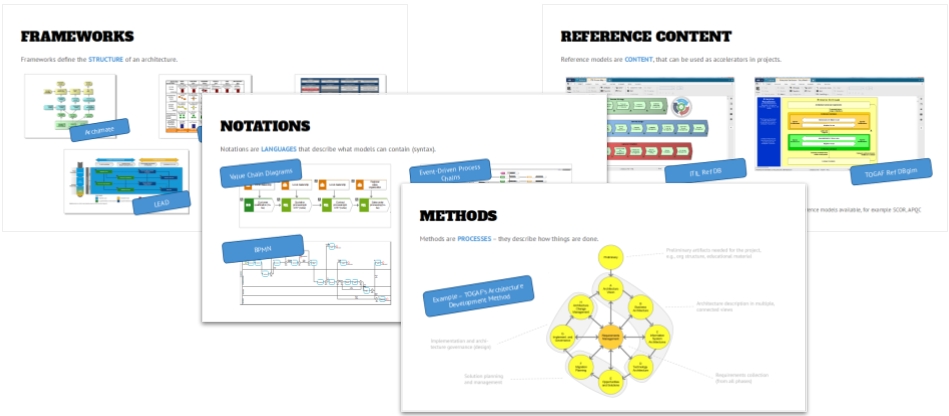

Enterprise Architecture Frameworks

This is the one term that is marketed as the “special sauce” by enterprise architecture framework providers (and they are happy to sell you training and certifications, and even to license the framework to you), but in reality frameworks are seen as buzzwords and don’t have day-to-day relevance for practitioners.

But what are EA frameworks?

Beginning with the Zachman framework, which dates back to 1987, through Professor Scheer’s Architecture of Integrated Information Systems (ARIS) framework and The Open Group Architecture Framework (TOGAF), practitioners in the EA space have developed a lot of different frameworks. Most of them aim to structure content so that users can create it in a repeatable way, and provide guidance on where to store and find this content. They typically define views and viewpoints for the content.

In addition to this, some enterprise architecture frameworks also provide a method (for example TOGAF) or a notation (for example ArchiMate), while others exclude these extensions and focus on being more specific about the individual artifacts (the “what”) that shall be created. The Department of Defense Architecture Framework (DoDAF), however, explicitly does not describe how things shall be depicted, but rather follows the mantra of “fit for purpose”.

This makes comparisons difficult and blurs the lines between the various frameworks, while on the other hand some industries require following a specific framework to get approvals (in the US, for example, not only does the DoD require the use of DoDAF, but other federal and state/local governments also make it mandatory when implementing systems).

Why are they relevant and how should you use them?

As said above, the main benefit of selecting an EA framework to follow is the alignment of users and their expectations of where to find the content that they are looking for. The overall benefit is less time spent searching for content and less training effort.

This will be implemented in the folder structure that, for example, has two folders (Enterprise Baseline -“what runs?”- and Projects/Solutions – “what changes?”) on the main level, while the structure below will be determined by the various views that the framework prescribes. When a project goes live, the designs that are in “Projects/Solutions” now can be moved into the Enterprise Baseline to reflect the new “what runs”, and be available for future analysis and reporting.
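The folder structure and the go-live move described above can be sketched in a few lines of Python. This is only an illustration of the idea, not how any particular architecture tool stores its content; the class, the view names ("Business", "Application"), and the project and design names are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Repository:
    """Hypothetical repository with the two main-level folders from the text."""

    # Enterprise Baseline ("what runs?"): view name -> design names
    baseline: dict[str, set[str]] = field(default_factory=dict)
    # Projects/Solutions ("what changes?"): project -> view name -> design names
    projects: dict[str, dict[str, set[str]]] = field(default_factory=dict)

    def add_design(self, project: str, view: str, design: str) -> None:
        """File a design under the project, in the view the framework prescribes."""
        self.projects.setdefault(project, {}).setdefault(view, set()).add(design)

    def go_live(self, project: str) -> None:
        """Move all designs of a project into the Enterprise Baseline."""
        for view, designs in self.projects.pop(project, {}).items():
            self.baseline.setdefault(view, set()).update(designs)


# Hypothetical example data
repo = Repository()
repo.add_design("CRM Rollout", "Business", "Lead-to-Order process")
repo.add_design("CRM Rollout", "Application", "CRM system landscape")
repo.go_live("CRM Rollout")
```

After `go_live`, the project folder is gone and its designs sit in the baseline under the same views, which is the point of keeping one view structure on both sides.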

Methods

Methods are processes that describe how things are done. These can be related to a framework (see TOGAF), or be available as a standalone artifact. Another example might be the Scaled Agile Framework (SAFe) that not only contains the structures for scaling Agile (e.g., the “Trains”), but also includes the steps that need to be taken at each level of the concept.

What methods are available?

There is a myriad of methods out there in various degrees of sophistication. Some of them have become the “classics” and will be taught at universities -such as TOGAF- and others are proprietary, and copyrighted by a consulting firm or an industry consortium, so that you can use them only when you hire that organization for a project. Some others even claim to be open source, while their terms and conditions are in complete opposition to the four freedoms of free software – they just want to have community input that then will be included in the content that is available for their paying members.

While your requirements for methods might differ greatly, I recommend doing some research, looking for industry-specific methods, and asking your tool or consulting partner for recommendations. Groups on LinkedIn and other forums might also be a good place to ask for recommendations.

Once you have narrowed down what method package might be applicable for you, I suggest you look at the provider and the maturity of the method content – check if it is well documented, if training is available, or if an ecosystem exists around this methodology. Lastly, I would review the material together with your fellow architects and determine which experiences and favorites already might exist in your organization.

Why are methods relevant and how do you adapt them?

Methods can be very helpful when designing architecture artifacts, especially when you are beginning with this exercise. However, almost all methodologies need to be adapted to your organization’s needs. They might be very extensive (the TOGAF spec is 900+ pages, and very “dry”), or they might have an “old-fashioned” flavor to them – to stick with TOGAF again: the deliverables in the spec have a document focus, such as the Architecture Description Document, while your design in an architecture tool will be much more flexible and “living” than a document on (virtual) paper that nobody reads.

But the content that is described in the TOGAF spec is highly relevant and will ensure that you don’t forget important things. You just have to adapt the content to your setup, processes, and tools. My suggestion is that you set up a methodology group that defines your organization’s standards for architecture work. These standards should cover the artifacts that you will create, the configuration of your tool (see structure above and the notations section below), and the EA Method capability that will be described in another article.

When you implement the standards some things might seem strange to your audience, so encourage them to follow the rituals of the method. For example, the stand-ups in Agile feel weird when you get started, and so might the fact that you don’t produce documents as designs, even though you may have done this in your organization for years and decades. My suggestion is to give those unfamiliar and uncomfortable things a try, and trust the method before you make changes and miss the benefits – remember: people inherently do not like change, and convincing them and bringing them on board will be the biggest roadblock on your implementation road.

The outcome should be a set of documented standards that your users can “ease into” – don’t start with a 400-page document that hinders adoption. Include your standards in the training material for new users and provide support offerings for them (an internal forum, an email inbox for suggestions and questions, “open hours” for real-time interaction with your users). Also make them available as a reference and tiebreaker when decisions need to be made, and treat them as a product that follows its own release cycle and gets updated on a regular basis.

Notations

Notations define the graphical representations and “grammar” for the artifacts that are created – the language/syntax. They prescribe the model types that shall be used for a given topic (e.g., lower-level processes or data models), and the objects that are used within those. In addition to this, the notations define rules for which objects can be connected to which, and which attributes need to be used.

This has the benefit that database-driven architecture tools can check the completeness and correctness of models before submitting them for approval or implementation, so that missing mandatory attributes or incorrect modeling that will prevent automation can be detected, and costly rework during the implementation can be avoided. It will also reduce implementation risk, because the number of changes during coding compared to the blueprint will be reduced.
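To make the kind of check described above more tangible, here is a minimal sketch of how connection rules and mandatory attributes could be validated. It does not reflect the rule set of any real notation or the API of any tool; the object types, the allowed connections, and the mandatory attributes are assumptions made up for this example.

```python
from dataclasses import dataclass, field

# Hypothetical notation rules: which object types may connect to which,
# and which attributes are mandatory per object type.
ALLOWED_CONNECTIONS = {("event", "function"), ("function", "event"), ("role", "function")}
MANDATORY_ATTRIBUTES = {
    "function": {"name", "description"},
    "event": {"name"},
    "role": {"name"},
}


@dataclass
class ModelObject:
    id: str
    type: str
    attributes: dict = field(default_factory=dict)


def check_model(objects, connections):
    """Return a list of findings; an empty list means the model passes the check."""
    by_id = {obj.id: obj for obj in objects}
    findings = []
    # Completeness: every mandatory attribute must be present and non-empty.
    for obj in objects:
        required = MANDATORY_ATTRIBUTES.get(obj.type, set())
        for attr in sorted(a for a in required if not obj.attributes.get(a)):
            findings.append(f"{obj.id}: missing mandatory attribute '{attr}'")
    # Correctness: every connection must be allowed by the notation rules.
    for source_id, target_id in connections:
        pair = (by_id[source_id].type, by_id[target_id].type)
        if pair not in ALLOWED_CONNECTIONS:
            findings.append(f"{source_id} -> {target_id}: {pair[0]} may not connect to {pair[1]}")
    return findings


# Hypothetical model with one missing attribute and one invalid connection
objects = [
    ModelObject("e1", "event", {"name": "Order received"}),
    ModelObject("f1", "function", {"name": "Check order"}),
    ModelObject("e2", "event", {"name": "Order checked"}),
]
connections = [("e1", "f1"), ("f1", "e2"), ("e1", "e2")]
findings = check_model(objects, connections)
```

Running such a check before a model is submitted for approval surfaces both kinds of problems (here, the missing description on `f1` and the event-to-event connection) while they are still cheap to fix.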

Which notations should I use?

This is a very loaded question and you will hear a ton of different answers when you ask others. There are industry standards out there (BPMN, UML, DMN to mention a few), but the correct answer is “it depends”. Have a look at what you want to describe, and in which view the artifact lives (see the structure topic above). It also might help to look at what your chosen tool recommends – which might not be very helpful when ARIS, for example, ships with 120+ model types out of the box. However, it also ships with a pretty good method help that will guide you, and there is an ecosystem available where you can ask deeper questions (forums, professional/consulting services).

For processes, for example, some generally accepted conventions have evolved: a few levels of Value Chain Diagrams and one level of either BPMN or EPC processes below. Which of the two latter notations you might want to choose is a matter of personal preference, ease of adoption, and tool capabilities (some features might require a certain notation – for example BPMN is required if you want to use the out of the box process compliance check in ARIS Process Mining, while the synchronization with SAP will work with either notation).

How do I implement notations?

The implementation of a notation has multiple aspects: one is the selection of notations as described above, the other is the implementation in your tool, which is part of the “Technical Governance” phase and will be described in more detail later.

The next step is to create training and support material, and communicate the benefits of using a standard language, especially if it is an industry standard notation: your stakeholders and users will learn skills that can be reused in future projects or at other employers. It will also simplify communications with other parties (other projects, business partners, vendors) significantly.

Lastly, the standards group mentioned above should also solicit feedback on the use of notations in the field (what works/what doesn’t work?), and look out for changes in the specifications and in the use of notations in the industry, so that they can be incorporated as changes. However, switching notations completely should be a very rare event, because it might cause rework and affect existing analyses. In most cases selecting a notation (vs. allowing “free form modeling”) and sticking with it is enough – you should have a very good reason for notation replacements.

Reference Content

Reference content is pre-packaged content from a vendor or other organizations, such as processes on all levels of the hierarchy. It aims to accelerate project delivery, and to implement standards and patterns that will then simplify the selection of technologies, or leading practices as a secondary benefit.

What is reference content?

Reference content is pre-packaged content from a vendor or another organization that can come in different formats – from Excel sheets, to databases in a tool vendor-specific format and proprietary tools that are built by the reference content provider.

An example of reference content is the Process Classification Framework (PCF) by the American Productivity & Quality Center (APQC). The PCF is a decomposition of process titles on 5 levels that are structured in 13 main process areas (the “what”). It includes not only a generic PCF, but also industry-specific versions, which obviously makes things more interesting for customers. What it does not bring to the table is the content of the lower-level processes (the “how”) because this is determined by too many factors, such as the selection of tools, or the org structure of an organization. This type of content might be available from a tool vendor (SAP, for example) or a consulting organization (Software AG, for example, offered their Industry.Performance.Ready content that not only included process models, but also a pre-configured SAP system and performance measurement as a consulting offering in the past).
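A decomposition like this is easy to work with once it is imported, because the level and the parent of each entry can be derived from its identifier. The sketch below assumes a dotted hierarchy ID in which a main process area carries a trailing ".0" (for example "1.0") and each further level adds one segment; this ID scheme and the function names are assumptions for illustration, so check the actual download before relying on them.

```python
from typing import Optional


def pcf_level(hierarchy_id: str) -> int:
    """Derive the level (1 to 5) from a dotted hierarchy ID such as '1.0' or '1.1.2'."""
    parts = hierarchy_id.split(".")
    # Assumption: main process areas are written as 'N.0' and count as level 1.
    if len(parts) == 2 and parts[1] == "0":
        return 1
    return len(parts)


def parent_id(hierarchy_id: str) -> Optional[str]:
    """Return the ID of the parent entry, or None for a main process area."""
    parts = hierarchy_id.split(".")
    if pcf_level(hierarchy_id) == 1:
        return None
    if len(parts) == 2:
        # Level 2 entries hang directly below their main process area.
        return f"{parts[0]}.0"
    return ".".join(parts[:-1])
```

With these two helpers, a flat list of entries (for example from an Excel sheet) can be turned into the folder or model hierarchy of your architecture tool.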

In addition to the process classification, APQC also adds definitions of typically used operational KPIs to the package, and offers industry benchmark values of these KPIs as an add-on service. The PCF and the KPI definitions are freely available for download and import into your architecture tool, and APQC also offers free information about benchmarking and how to create a baseline for your process improvement activities.

Why is reference content relevant?

Very often clients ask for the creation of reference architectures, and looking for industry-specific reference content to be used as a baseline can be a huge accelerator. In addition to this, your proposed content will gain more credibility if it is based on content from an acknowledged provider (e.g., the tool vendor, your consulting partner), or an industry consortium. Enabling comparisons, as described in the APQC example above, is also a welcome benefit.

In some cases, e.g., with SAP, the reference content is also enhanced with additional information that enables the synchronization with an architecture tool that is used for developing the blueprint in the Solution Design phase of the project. The large consulting firms also have developed their own reference content as part of their offerings, which might be based on other reference content (APQC is a favorite here), but is enhanced by their project experience and the leading practices that they’ve seen and helped clients to implement.

In addition to this, collecting reusable patterns that are offered as reference content (or developed by yourself in your architecture tool) will allow projects to design their solutions faster. If they adhere to the reference structures, an approval by an ARB, for example, might be “rubber stamped”, because the due diligence for solution components might have been done already (for example a security review of a cloud provider’s offerings).

Summary

I hope that this article brought a bit more clarity to the four different items discussed, even though in reality things are not as clear-cut as one would like. However, standardizing the terminology in your organization will help you to communicate and avoid misunderstandings.

Roland Woldt is a well-rounded executive with 25+ years of Business Transformation consulting and software development/system implementation experience, in addition to leadership positions within the German Armed Forces (11 years).

He has worked as Team Lead, Engagement/Program Manager, and Enterprise/Solution Architect for many projects. Within these projects, he was responsible for the full project life cycle, from shaping a solution and selling it, to setting up a methodological approach through design, implementation, and testing, up to the rollout of solutions.

In addition to this, Roland has managed consulting offerings during their lifecycle, from definition through delivery to update, and had revenue responsibility for them.

Roland is the VP of Global Consulting at iGrafx, and has worked as the Head of Software AG’s Global Process Mining CoE, as Director in KPMG’s Advisory, and had other leadership positions at Software AG/IDS Scheer and Accenture. Before that, he served as an active-duty and reserve officer in the German Armed Forces.